On Arguments from Authority

Saturday, 29 April 2023

Most people who claim that argument from authority is fallacious would, perversely, argue for that claim by reference to the authority of common knowledge or of what is often taught. A fallacy is actually shown by demonstrating a conflict with a principle of logic or by an empirical counter-example. A case in which an authority proved to be wrong might be taken as the latter, but matters are not so simple.

When one makes a formal study of logic, that study is usually of assertoric logic, the logic in which every proposition is treated as if knowable to be true or knowable to be false, even if the study sometimes deliberately treats a true proposition as false or a false proposition as true. In the context of assertoric logic, an argument from authority is indeed fallacious.

But most of the propositions with which we deal are not known or knowable to be true or false; rather, we find that some propositions are relatively more plausible than others. Our everyday logic must be the logic of that ordering. Within that logic, showing that a proposition has one position in the ordering given some information does not show that it did not have a different position without that information. So we cannot show that arguments from authority are fallacious in the logic of plausibility simply by showing that what some particular authority claimed to be likely or even certainly true was later shown to be almost certainly false or simply false.
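As a rough illustration, in notation assumed here only for the sketch (it is not drawn from any particular formal system), write $(A \mid I)$ for proposition $A$ considered in light of information $I$, and $\succ$ for "is more plausible than". An authority's claim can be well placed on the information available at the time and yet badly placed once further information $J$ arrives:

```latex
\[
(A \mid I) \succ (\neg A \mid I)
\quad\text{and}\quad
(\neg A \mid I \wedge J) \succ (A \mid I \wedge J)
\]
```

The two rankings concern different bodies of information, so the second does not contradict the first.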

Arguments from authority, though often not recognized as such, are essential to our everyday reasoning. For example, most of us rely heavily upon the authority of others as to what they have experienced; we even rely heavily upon the authority of n-th-hand reports and distillations of reports of the experiences of others. And none of us has fully explored the theoretic structure of the scientific theories that the vast majority of us accept; instead, we rely upon the authority of those transmitting sketches, gists, or conclusions. Some of those authorities have failed us; some of those authorities will fail us in the future; those failures have not made and will not make every such reliance upon authorities fallacious.

However, genuine fallacy would lie in over-reliance upon authorities: putting some authoritative claims higher in the plausibility ordering than any authoritative claims should be, or failing to account for factors that should lower the places in the plausibility ordering associated with authorities of various sorts, such as those with poor histories or with conflicts of interest.

By the way, I have occasionally been accused of arguing from authority when I've done no such thing, but instead have pointed to someone who was in some way important in development or useful in presentation of an argument that I wish to invoke.

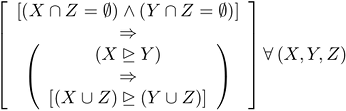

in which X, Y, and Z are sets of events. The underscored arrowhead is again my notation for

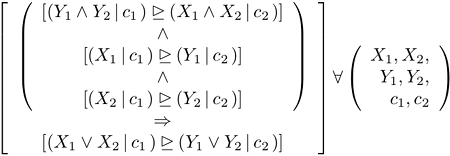

To get de Finetti's principle from it, set